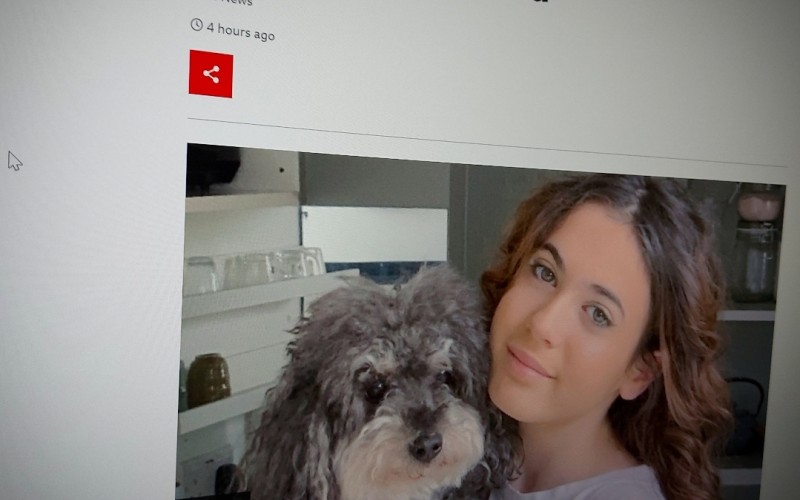

A father whose 14-year-old daughter killed herself is calling for parents to be able to access their children's social media after they die.

Mariano Janin believes his daughter Mia saw bullying messages on her phone the night before her death in March 2021.

He wants parents to be able to access any messages or videos a child may have seen on social media before they died.
